Category: Generative AI

-

On Hinton’s argument for superhuman AI.

May 30, 2023 · AGI opinion. Last week in Cambridge was a Hinton bonanza. He visited the university town where he was once an undergraduate in experimental psychology, and gave a series of back-to-back talks, Q&A sessions, interviews, dinners, etc. He was stopped on the street by random passers-by who recognised him from the lecture,…

-

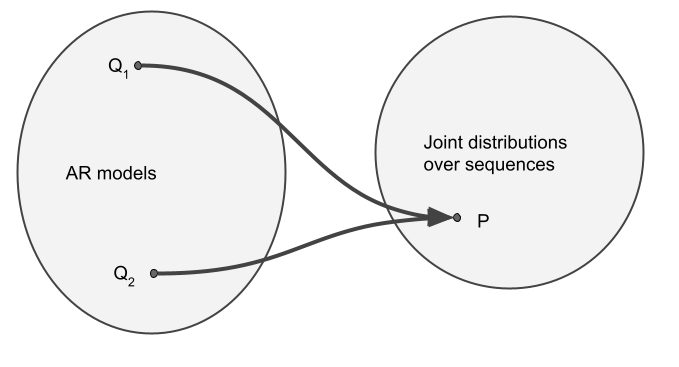

Autoregressive Models, OOD Prompts and the Interpolation Regime

March 30, 2023. A few years ago I was very much into maximum likelihood-based generative modeling and autoregressive models (see this, this or this). More recently, my focus shifted to characterising inductive biases of gradient-based optimization, focussing mostly on supervised learning. I only very recently started combining the two ideas, revisiting autoregressive models through…

-

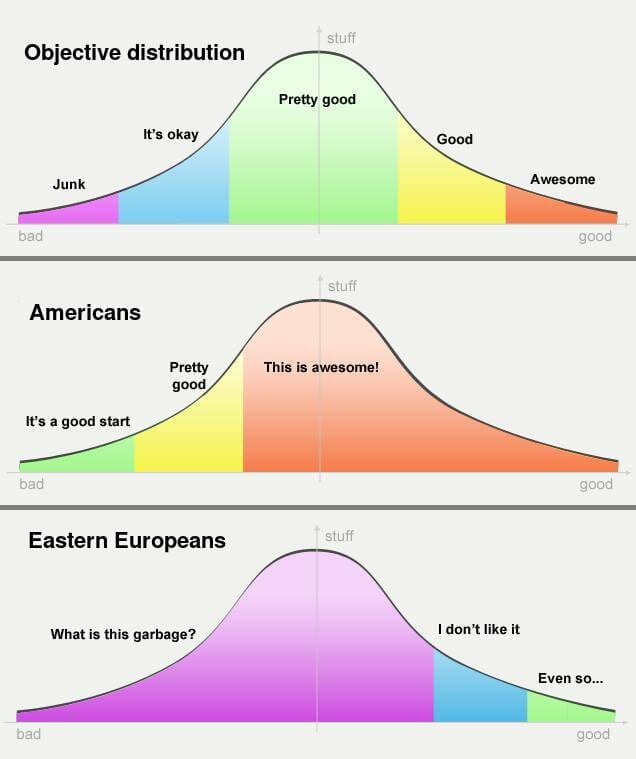

Eastern European Guide to Writing Reference Letters

February 28, 2022. Excruciating. One phrase I often use to describe what it’s like to read reference letters for Eastern European applicants to PhD and Master’s programs in Cambridge. Even objectively outstanding students often receive dull, short, factual, almost negative-sounding reference letters. This is a result of (A) cultural differences – we are very…

-

Understanding Convolutions on Graphs

This article is one of two Distill publications about graph neural networks. Take a look at A Gentle Introduction to Graph Neural Networks for a companion view on many things graph and neural network related. Many systems and interactions – social networks, molecules, organizations, citations, physical models, transactions – can be represented quite…

-

Distill Hiatus

Over the past five years, Distill has supported authors in publishing artifacts that push beyond the traditional expectations of scientific papers. From Gabriel Goh’s interactive exposition of momentum, to an ongoing collaboration exploring self-organizing systems, to a community discussion of a highly debated paper, Distill has been a venue for authors to experiment in…

-

Branch Specialization

This article is part of the Circuits thread, an experimental format collecting invited short articles and critical commentary delving into the inner workings of neural networks. If we think of interpretability as a kind of “anatomy of neural networks,” most of the circuits thread has involved studying tiny little…

-

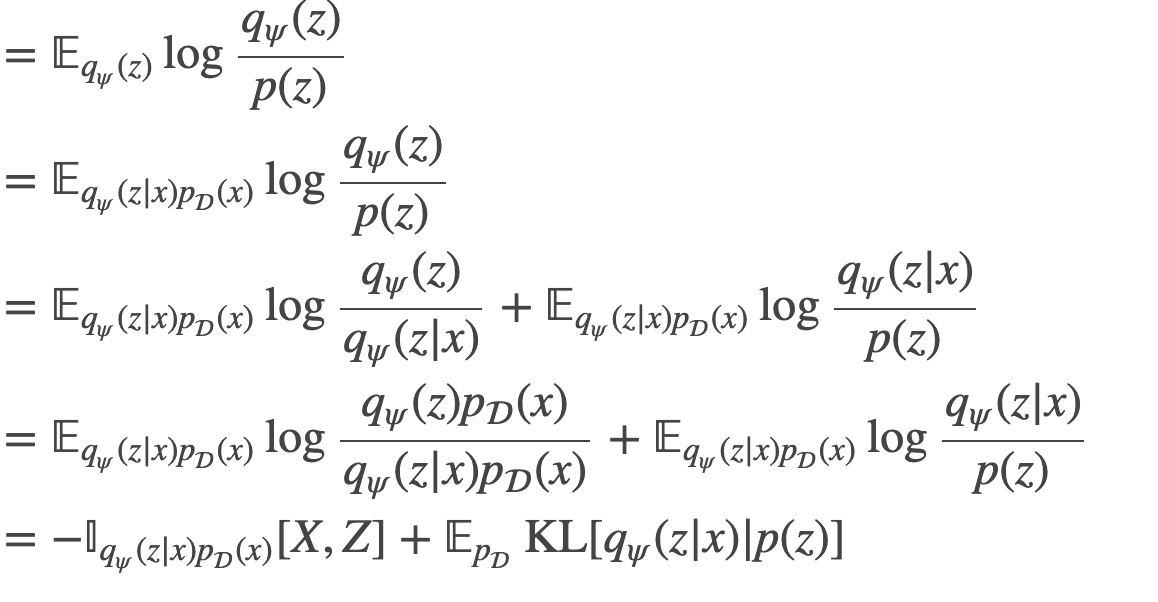

An information maximization view on the $\beta$-VAE objective

Guest post with Dóra Jámbor. This is a half-guest-post written jointly with Dóra, a fellow participant in a reading group where we recently discussed the original paper on $\beta$-VAEs. On the surface of it, $\beta$-VAEs are a straightforward extension of VAEs where we are allowed to directly control the tradeoff between the reconstruction and…
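For reference, the $\beta$-VAE objective is commonly written as the standard VAE evidence lower bound with a weight $\beta$ on the KL term. The notation here is our assumption and may not match the full post: encoder $q_\phi(z \mid x)$, decoder $p_\theta(x \mid z)$, prior $p(z)$.

$$
\mathcal{L}_\beta(\theta, \phi; x) = \mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] - \beta \, \mathrm{KL}\left(q_\phi(z \mid x) \,\|\, p(z)\right)
$$

Setting $\beta = 1$ recovers the usual VAE lower bound. Values of $\beta > 1$ penalise the KL term more heavily relative to the reconstruction term.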